CRANKIT

Crowd Ranking Kit

How It Works

CRANKIT is designed to help you obtain the preferences or priorities of a group of people faced with several options, and to provide a robust analysis of group opinion. A pollster sets up the poll by identifying the question(s) to be asked and setting out the options (potential answers to the question) that they wish participants to rank. Participants are asked to rank the options via a web link. Their rankings are stored and, when the pollster closes the poll, combined, analysed and fed back to the pollster, who may then choose to share the results page with others.

Getting Ranks

As a pollster, you set up a CRANKIT poll by specifying the question(s) to be asked and setting out the potential options that you wish participants to rank. You then decide whether the poll is:

- Invite-only - in which case you provide the email addresses of the people you want to invite;

- Global - in which case a single link is generated that can be circulated and forwarded.

Considerations: Global polls are the easiest to set up and the most flexible (since you do not have to pre-specify who gets to vote), but there is no way to stop people voting more than once and no way for people to change their minds without voting twice. You also have no record of who has voted (except for the names entered).

Invite-only polls are slightly more cumbersome to set up, since you need to enter the email address of each participant, but they do ensure only one vote per invite. They also allow people to change their minds (only their last vote counts). The pollster can see who has voted (once more than three people have voted).

In future, you as pollster will also decide whether the poll is:

- Private – in which case you as pollster do not see the responses of individual participants*;

- Semi-private – in which case you as pollster see the responses of individual participants but other participants do not. Participants are alerted to the fact that you can view their response; or

- Fully transparent – in which case you and all participants can see the responses of individual participants.

At present (June 2015), only private polls are permitted.

As a participant, you are asked to rank options via a web link and are told whether the poll is invite-only or global and whether it is private, semi-private or fully transparent. Important points to note before completing the poll are that:

- You can rank as many or as few of the options provided as you wish - those you leave unranked are assumed to be tied in last place.

- You can give two or more options the same rank. For instance, if you have strong views about your most preferred option and your least preferred option but have no preferences concerning options in the middle, you can simply give each option in this middle subset the same rank (between the ranks you gave for your most and least preferred options).

- CRANKIT uses these ranks only as an indication of your preferences between options – so there is no need to worry about the actual numbers you give as ranks (for instance, ranking a set of five options 1st, 2nd, 4th, 4th and 5th is essentially the same as ranking those same options 1st, 3rd, 5th, 5th and 7th).
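To illustrate why only the order of the ranks matters, here is a minimal Python sketch. The function name `normalise_ranks` and the dictionary layout are assumptions made for this example, not part of CRANKIT itself. It maps whatever numbers a participant enters onto consecutive positions, keeps ties, and places unranked options together in last place.

```python
def normalise_ranks(ranks, options):
    """Map raw ranks to consecutive positions (1, 2, 3, ...), keeping ties.

    `ranks` maps option -> the number the participant entered (lower is better).
    Options missing from `ranks` are treated as tied in last place.
    """
    distinct = sorted(set(ranks.values()))
    position = {value: i + 1 for i, value in enumerate(distinct)}
    last = len(distinct) + 1
    return {opt: position[ranks[opt]] if opt in ranks else last for opt in options}


options = ["A", "B", "C", "D", "E"]
first = normalise_ranks({"A": 1, "B": 2, "C": 4, "D": 4, "E": 5}, options)
second = normalise_ranks({"A": 1, "B": 3, "C": 5, "D": 5, "E": 7}, options)
print(first == second)  # both ballots express the same preferences

# A participant who ranks only one option leaves the rest tied in last place.
partial = normalise_ranks({"A": 3}, ["A", "B", "C"])
```

Here `first` and `second` are identical, which is the sense in which the two example ballots are "essentially the same".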

Participant responses are stored until the pollster closes the poll or the target number of participants has been reached. CRANKIT then combines the individual ranks using a published algorithm to give a robust analysis of group opinion (a group ranking).

How individual ranks are combined to give an overall group ranking

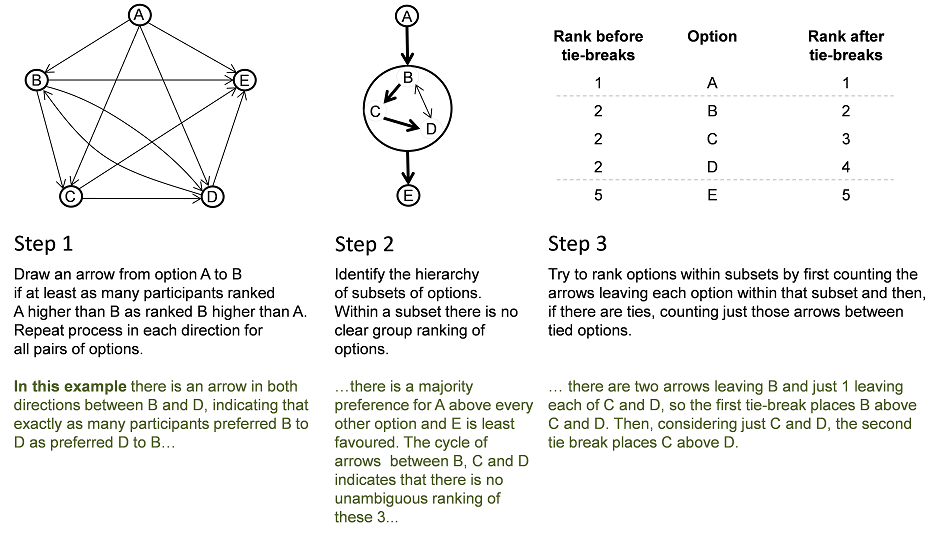

For each and every possible pair of options (A and B say) we see if at least as many participants prefer option A to option B as prefer option B to option A. If so, we draw an arrow from option A to option B. Note this means that if exactly as many participants prefer B to A as prefer A to B, there is an arrow in both directions.
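The pairwise comparison can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than CRANKIT's actual code: the names `prefers` and `majority_arrows` are hypothetical, each ballot is a dictionary of option -> rank with lower numbers preferred, and unranked options count as tied in last place.

```python
from itertools import combinations


def prefers(ballot, a, b):
    """True if this ballot ranks `a` strictly ahead of `b`."""
    worst = float("inf")  # unranked options sit in last place
    return ballot.get(a, worst) < ballot.get(b, worst)


def majority_arrows(ballots, options):
    """Return the set of arrows (winner, loser) over all pairs of options."""
    arrows = set()
    for a, b in combinations(options, 2):
        a_over_b = sum(prefers(ballot, a, b) for ballot in ballots)
        b_over_a = sum(prefers(ballot, b, a) for ballot in ballots)
        if a_over_b >= b_over_a:
            arrows.add((a, b))
        if b_over_a >= a_over_b:  # an exact tie gives arrows in both directions
            arrows.add((b, a))
    return arrows


ballots = [
    {"A": 1, "B": 2, "C": 3},
    {"A": 1, "B": 2},            # C left unranked
    {"B": 1, "A": 2, "C": 2},    # A and C given the same rank
]
arrows = majority_arrows(ballots, ["A", "B", "C"])
tied = majority_arrows([{"A": 1, "B": 2}, {"B": 1, "A": 2}], ["A", "B"])
```

With these three ballots the arrows are A to B, A to C and B to C. In the two-ballot case the participants split evenly, so `tied` contains an arrow in each direction.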

We use the resulting set of arrows to describe group opinion.

If there is a path of arrows from option A to option C but no path from option C to option A, we can say that there is an unambiguous group preference for option A over option C. Things get complicated if, for instance, a majority of participants prefer option A to C, a majority prefer C to B and a majority prefer B to A, forming a cycle of options among which there is no unambiguous group preference. This happens more often than you’d think!
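As a rough sketch of the path test, the Python below follows arrows outward from an option and checks whether the reverse journey is possible. The function names and the example arrows are assumptions chosen for illustration; arrows are stored as (from, to) pairs.

```python
def reachable(arrows, start):
    """Return every option that can be reached from `start` by following arrows."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for src, dst in arrows:
            if src == node and dst not in seen:
                seen.add(dst)
                stack.append(dst)
    return seen


def unambiguously_preferred(arrows, a, c):
    """True if there is a path from `a` to `c` but no path back from `c` to `a`."""
    return c in reachable(arrows, a) and a not in reachable(arrows, c)


# A beats C, C beats B and B beats A (a cycle); B also beats D.
arrows = {("A", "C"), ("C", "B"), ("B", "A"), ("B", "D")}
a_over_d = unambiguously_preferred(arrows, "A", "D")
a_over_c = unambiguously_preferred(arrows, "A", "C")
```

Here `a_over_d` is true, because D can be reached from A but not the other way round, while `a_over_c` is false, because A and C sit on the same cycle.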

We deal with this by first identifying an order among smaller groups of options where each small group is clearly preferred to the next but where there is no unambiguous ranking among options within a small group. These smaller groups may each include several options or just one.
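One way to picture this grouping step is sketched below in Python. It is an illustration, not CRANKIT's published algorithm: `ordered_groups` is a hypothetical name, and the sketch treats two options as belonging to the same small group when each can be reached from the other by following arrows. It also relies on every pair of options having at least one arrow between them, which the pairwise comparison described above guarantees.

```python
def reachable(arrows, start):
    """Return `start` plus every option reachable from it by following arrows."""
    seen, stack = {start}, [start]
    while stack:
        node = stack.pop()
        for src, dst in arrows:
            if src == node and dst not in seen:
                seen.add(dst)
                stack.append(dst)
    return seen


def ordered_groups(arrows, options):
    """Split options into groups, listed from most to least preferred."""
    reach = {opt: reachable(arrows, opt) for opt in options}
    groups = []
    for opt in options:
        # Two options share a group when each can reach the other.
        group = frozenset(o for o in options if o in reach[opt] and opt in reach[o])
        if group not in groups:
            groups.append(group)
    # A group whose members can reach more options sits earlier in the order.
    groups.sort(key=lambda g: len(reach[next(iter(g))]), reverse=True)
    return [sorted(g) for g in groups]


# A, B and C form a cycle; all three beat D and E; D beats E.
arrows = {
    ("A", "B"), ("B", "C"), ("C", "A"),
    ("A", "D"), ("B", "D"), ("C", "D"),
    ("A", "E"), ("B", "E"), ("C", "E"),
    ("D", "E"),
}
groups = ordered_groups(arrows, ["A", "B", "C", "D", "E"])
print(groups)
```

In this example the first group holds A, B and C together (no unambiguous ranking among them), followed by D on its own and then E.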

As a first tie-break, we rank the options within a subset by the number of arrows that leave each option for other options in that subset. If there are still ties at this stage, we count only the arrows between tied options.
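The tie-breaks might be sketched as follows. This is one possible reading, not a definitive implementation: the names `out_count` and `rank_within_group` are hypothetical, and the sketch assumes that the second tie-break simply repeats the arrow count using only the options that are still tied. Options that cannot be separated are returned together.

```python
def out_count(arrows, option, subset):
    """Number of arrows leaving `option` for other options in `subset`."""
    return sum(1 for src, dst in arrows
               if src == option and dst in subset and dst != option)


def rank_within_group(arrows, group):
    """Order one group of options; returns a list of tiers, best first."""
    tiers = {}
    for opt in group:
        tiers.setdefault(out_count(arrows, opt, group), []).append(opt)
    result = []
    for count in sorted(tiers, reverse=True):
        tied = tiers[count]
        if 1 < len(tied) < len(group):
            # Assumed second tie-break: recount using only the tied options.
            result.extend(rank_within_group(arrows, tied))
        else:
            result.append(sorted(tied))
    return result


# A group in which no option is unambiguously preferred (D beats A, closing a cycle).
arrows = {("A", "B"), ("A", "C"), ("D", "A"), ("B", "C"), ("B", "D"), ("C", "D")}
ranking = rank_within_group(arrows, ["A", "B", "C", "D"])

# A pure three-way cycle cannot be separated by counting arrows.
cycle = {("A", "B"), ("B", "C"), ("C", "A")}
unresolved = rank_within_group(cycle, ["A", "B", "C"])
```

In the first example A and B each have two outgoing arrows and C and D one each; counting only the arrows between tied options then puts A ahead of B and C ahead of D. In the pure cycle every option has exactly one outgoing arrow, so all three remain tied.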

Example

*Note that a devious pollster could collude with one participant to infer the preferences expressed by a third participant. We would consider this naughty.